Link-based toxicity testing is something I've used for years.

It's one of the reasons my service has remained successful and why people continue to rank with it. I don't assume my stuff works; I need to know that it works.

Whether you're testing PBN posts, guest posts, insertions, or any other link type, the goal is to determine whether the link is going to have a positive (pass) or negative (toxic) impact on your rankings. There are a number of factors that go into ranking; having a list of tested domains, or using providers that test their sites, means you should only see gains, provided you select your anchor text properly.

If you buy links from providers like me, you want to make sure they are performing these types of tests. Nothing sucks worse than toxic links pointing at your site, costing you rankings, time, and revenue.

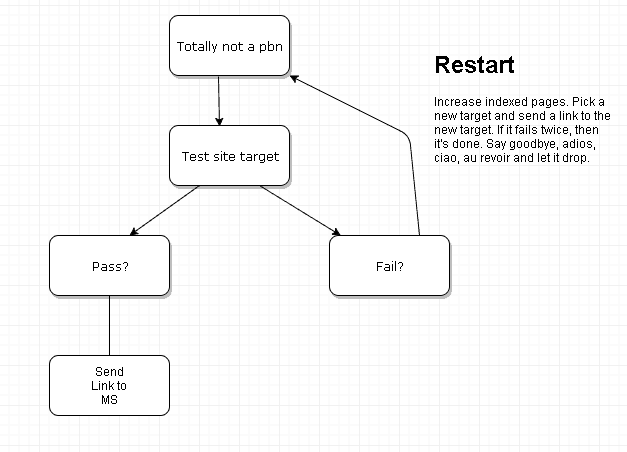

Essentially this is what we are doing:

A link is toxic if it has a negative impact on rankings, meaning your rankings decrease after the link is placed.

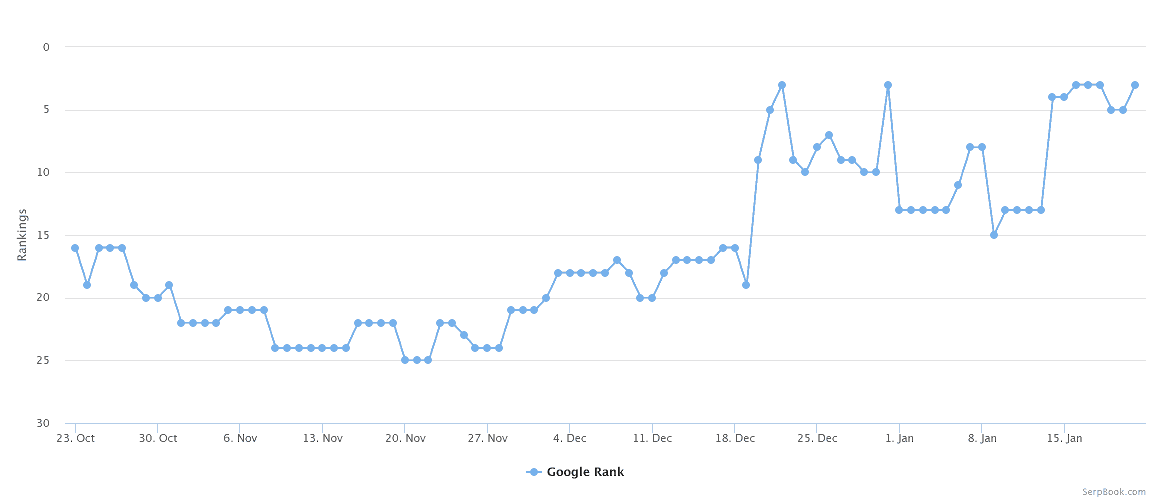

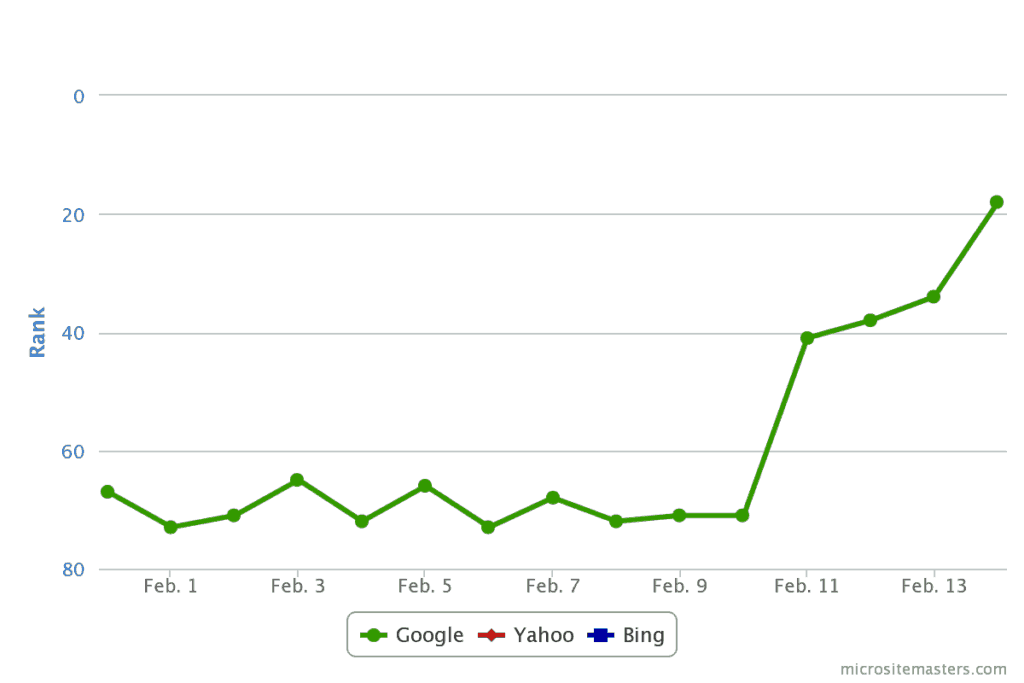

Here is an example of a positive test for blogs being added to my service:

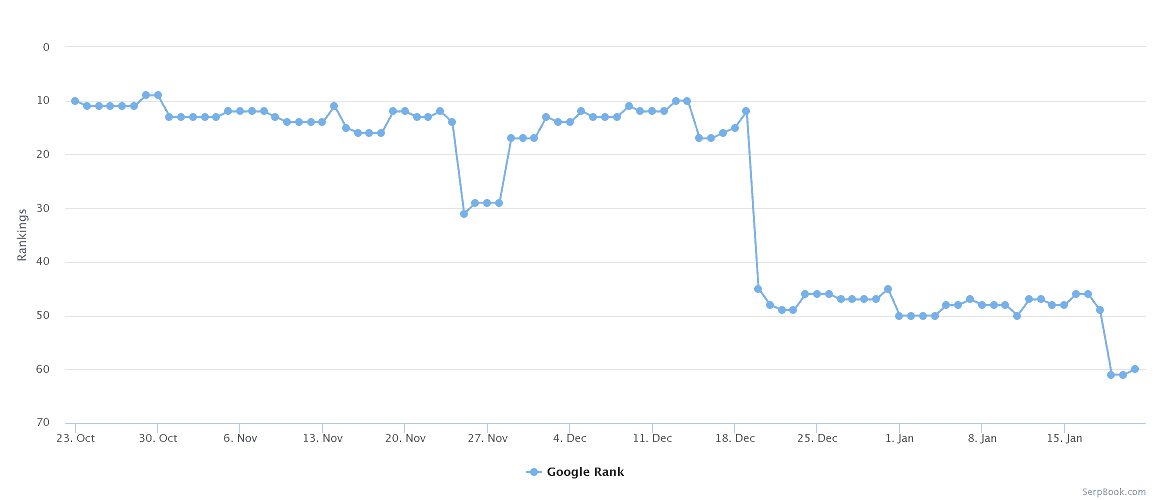

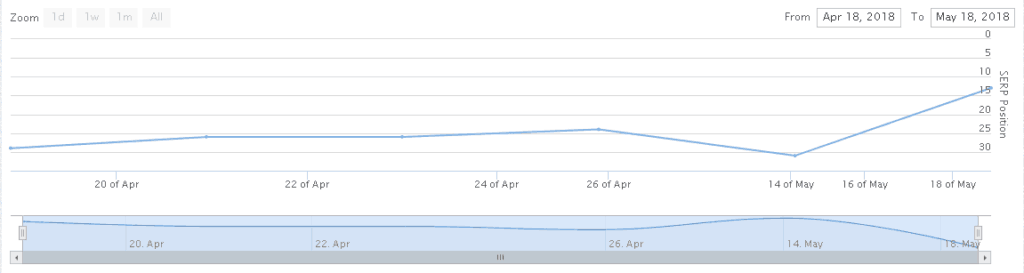

Here is an example of a negative link test.

What you see above is what a buddy and I call “draining.”

Imagine your money site (MS) is like a bottle of water or a cup of Juicy Juice, 100% juice. If a link is toxic, your site doesn’t want to drink it; let’s face it, bad Kool-Aid sucks. Instead, it starts to drain. Above, you can see this happen gradually, and then down the rabbit hole she goes.
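A rough way to flag draining in your own tracker data, as a minimal Python sketch. The `is_draining` helper and the rule it encodes (at least three checks after placement, positions never improving, and the latest position worse than the pre-placement baseline) are hypothetical assumptions, not a feature of any rank tracker.

```python
from typing import Sequence


def is_draining(post_ranks: Sequence[int], baseline_rank: int, min_checks: int = 3) -> bool:
    """Return True if rankings after placement keep getting worse.

    Positions are 1-based (1 = top of page 1), so a LARGER number is WORSE.
    Assumed definition of "draining" (illustrative only):
    - at least `min_checks` readings since the link went live,
    - no reading is better than the one before it, and
    - the latest reading is worse than the pre-placement baseline.
    """
    if len(post_ranks) < min_checks:
        return False  # not enough data to call it either way
    never_improves = all(later >= earlier for earlier, later in zip(post_ranks, post_ranks[1:]))
    return never_improves and post_ranks[-1] > baseline_rank


# Gradual slide, then a big drop: reads as draining.
print(is_draining([42, 45, 51, 88], baseline_rank=40))  # True
# Rankings improve after placement: not draining.
print(is_draining([38, 25, 18], baseline_rank=65))  # False
```

Positions that fall out of the tracked range entirely would need to be recorded as a large number (for example 101) for this check to see them.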

There are a number of factors to consider when setting up your test: money site targets/niche selection, anchor selection, setup, and more. I’ll address a couple of common questions and what to do in certain situations, both pre- and post-test.

I’m assuming at this point you have a domain ready to be tested. The domain should be set up, hosted, and indexed.

Note: At this stage, your site does not need to look pretty; think 2012 PBNs. A simple skeleton WordPress site, possibly with a unique theme and full posts on the homepage, is enough. There’s no point in having a site fully set up and looking fancy if it turns out to be toxic.

If you fully build them out yourself, you’re wasting your time. If you pay to have them built out, you’re possibly wasting money. At this point, all we are doing is testing the site, and the skeleton setup above will get the job done.

This is probably my least favorite part of this entire process.

I’m not a big believer in niche-based relevancy. To test your blogs, you shouldn’t be either. The goal here is just to see if your network site is passing positive link juice.

All my targets are local, meaning niches like carpet cleaning in Yorba Linda, tree removal in Tulsa, DUI lawyer in Miami, etc. Essentially, a local service + a populated city.

I use local sites for testing simply because local is easier to rank than e-com, affiliate, and authority sites. E-commerce and affiliate-style niches have many variables, and the goal is just to see whether OUR link is causing the SERP movement. There should be no doubt that your link caused the movement; we are trying to eliminate as many false positives as possible.

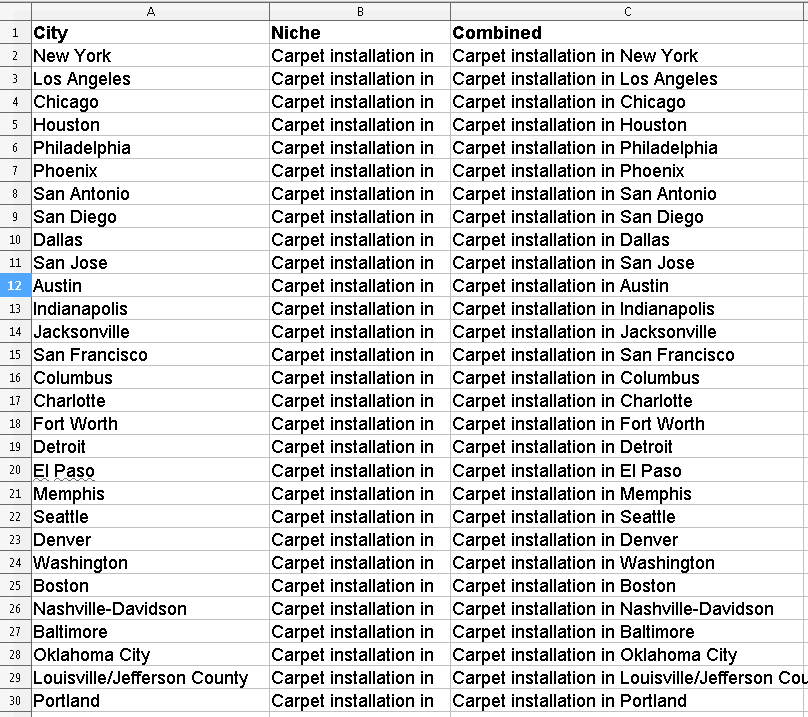

The first thing you need is a list of cities:

You then need a niche. Google “local niches” and you’ll likely find thousands of ideas. Pick one and use it for all of your target picks. In this case, I opted for “carpet installation.”

Here is what my Excel spreadsheet looks like:

In column “A” we have the city, in column “B” the niche, and in column “C” the niche and the city combined. The last column is simply =CONCATENATE(B2, " ", A2), dragged all the way down (the " " keeps a space between the niche and the city).
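If you’d rather script this than drag a formula down, here is a minimal Python sketch of the same niche + city combination, using the “service in city” phrasing I search with. The city list is just the example cities from this post, and `build_targets` is a hypothetical helper name.

```python
# Mirrors the spreadsheet: column A = city, column B = niche,
# column C = the combined "niche in city" phrase.
cities = ["Yorba Linda", "Tulsa", "Miami"]  # example cities from this post
niche = "carpet installation"


def build_targets(niche: str, cities: list[str]) -> list[str]:
    """Combine one niche with every city, like filling column C."""
    return [f"{niche} in {city}" for city in cities]


for keyword in build_targets(niche, cities):
    print(keyword)
# carpet installation in Yorba Linda
# carpet installation in Tulsa
# carpet installation in Miami
```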

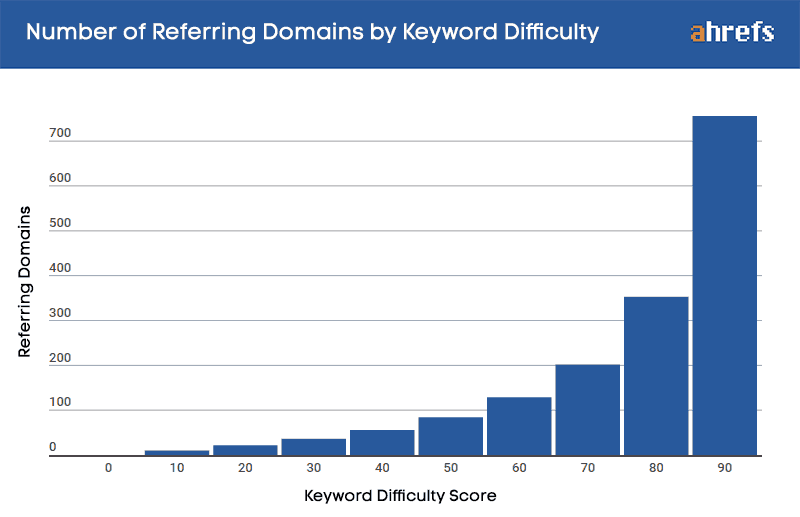

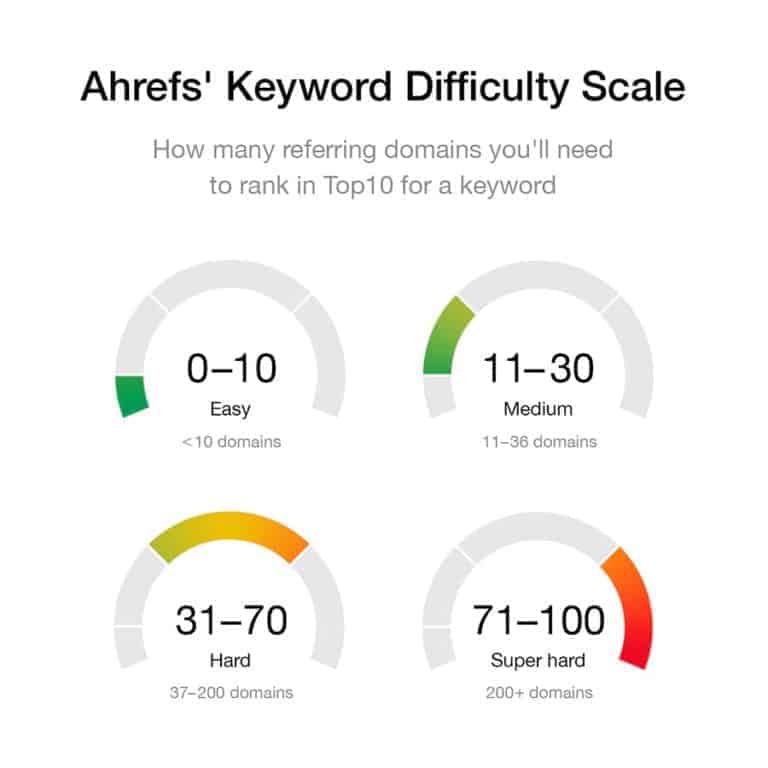

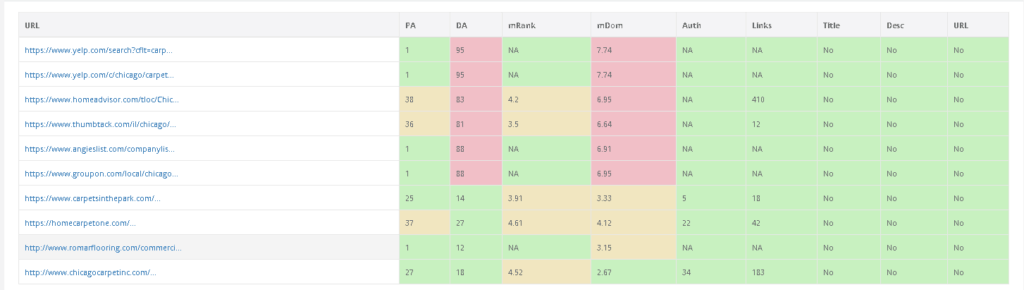

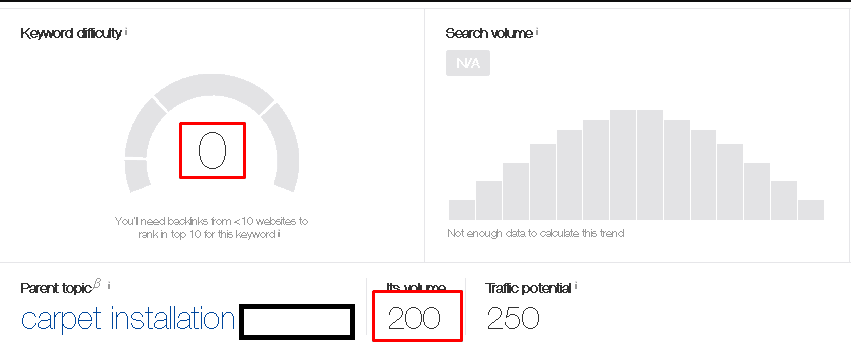

Now that we have a way to find targets, we need to analyze these keywords and decide whether they are suited to our testing needs. For this, we are going to use Ahrefs Keywords Explorer. Essentially, we want keywords with a Keyword Difficulty (KD) of less than 5.

Why less than 5?

Simply put, the keyword is very easy to rank for, so we should see SERP jumps that make it clear you are causing the movement.

The next step is to plug the “Niche+City” phrase into Ahrefs. I tend to search for just the service + city, like this: Carpet Installation in CITY.

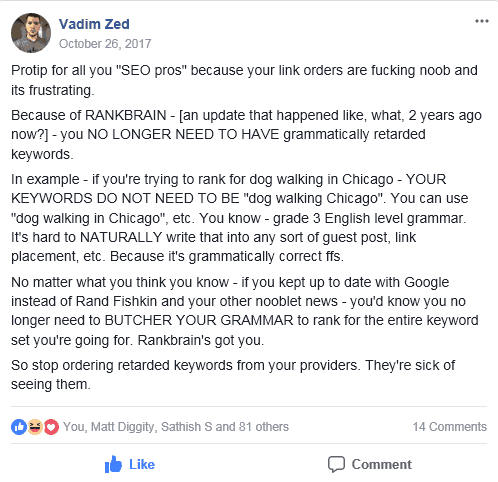

Rant While I Have You: It’s 2019. Google is pretty smart. You don’t need to use broken English in your anchors just because a tool says the “in” version doesn’t have volume. “Carpet installation in City” means the same thing as “Carpet installation City”; only one of them is grammatically correct.

I’ll let Vadim explain this for me; he does it better.

This is what I mean.

I use both Keysearch.co and Ahrefs for keyword research. Comparing these two:

These SERPs are nearly identical. Don’t be afraid to use proper grammar in your anchors. I’ll love you, Google will love you, and your site will love you.

As I said, the main criterion is a KD below 5. I’ll take a KD of 0 if I can get it, and a search volume of 50-200 works best. Grab the list you created above and plug and chug until you find a keyword that meets the criteria.
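Once you’ve looked up KD and volume for each phrase, the plug-and-chug step is just a filter. A minimal Python sketch, assuming you’ve copied the numbers out of Ahrefs yourself (it doesn’t call any Ahrefs API); the sample rows are invented, and sorting lowest KD first reflects taking a 0 when you can get it.

```python
from dataclasses import dataclass


@dataclass
class KeywordStats:
    keyword: str
    kd: int      # Ahrefs Keyword Difficulty, copied in by hand (assumption)
    volume: int  # monthly search volume


def pick_test_keywords(rows: list[KeywordStats]) -> list[KeywordStats]:
    """Keep KD < 5 and volume 50-200, easiest (lowest KD) first."""
    keep = [r for r in rows if r.kd < 5 and 50 <= r.volume <= 200]
    return sorted(keep, key=lambda r: (r.kd, -r.volume))


# Made-up numbers, for illustration only.
rows = [
    KeywordStats("carpet installation in Tulsa", 3, 150),
    KeywordStats("carpet installation in Miami", 12, 400),
    KeywordStats("carpet installation in Yorba Linda", 0, 60),
    KeywordStats("carpet installation in Reno", 0, 20),
]
for r in pick_test_keywords(rows):
    print(r.keyword, r.kd, r.volume)
# carpet installation in Yorba Linda 0 60
# carpet installation in Tulsa 3 150
```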

The next thing to do is actually pick the site. There are two schools of thought for this: pick a site already sitting on page 2, or pick one buried on pages 5 to 8.

I prefer the latter.

My reasoning is that some sites are just stuck on page 2. I’m not here to get a site unstuck or to diagnose why it’s there. Moving a site from page 5+ to page 2 is typically easier than moving one from page 2 to page 1 when you don’t know its exact history.
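If your tracker reports positions rather than pages, here is a quick Python sketch for shortlisting candidates on pages 5 to 8 instead of page 2. It assumes a flat 10 organic results per page, which real SERPs with maps packs and other features don’t always follow, and the domains and positions are placeholders.

```python
def serp_page(position: int, results_per_page: int = 10) -> int:
    """Convert a 1-based organic position to a SERP page number (assumes 10 per page)."""
    return (position - 1) // results_per_page + 1


def in_target_pages(position: int, first_page: int = 5, last_page: int = 8) -> bool:
    """True if a candidate sits on pages 5 to 8."""
    return first_page <= serp_page(position) <= last_page


# Placeholder candidates: domain -> current position for the test keyword.
candidates = {"site-a.example": 14, "site-b.example": 47, "site-c.example": 79, "site-d.example": 95}
picks = [site for site, pos in candidates.items() if in_target_pages(pos)]
print(picks)  # ['site-b.example', 'site-c.example']
```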

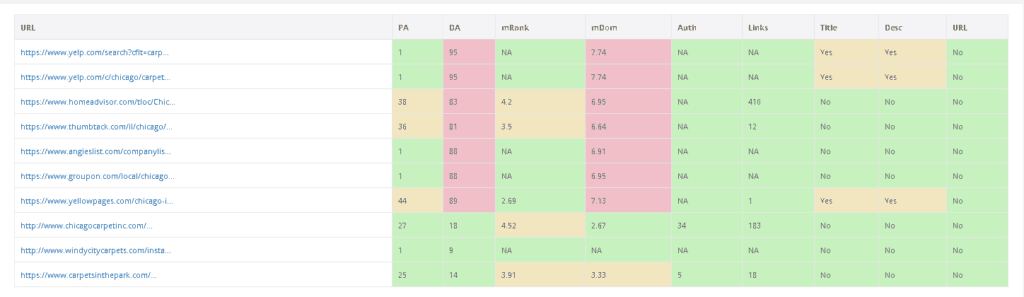

What to Look For?

Look for HTML sites that look like they’re from the early 2000s; they usually meet the criteria.

Note: Don’t be super anal-retentive about picking a site. The above is just a general idea of what to look for.

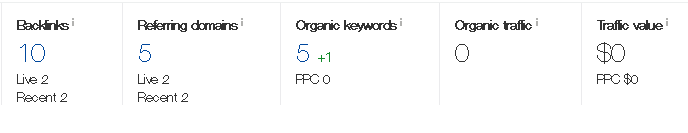

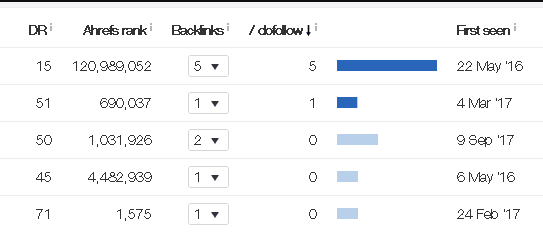

I went through the SERPs and found this site:

Overall, this site is a solid test target. The meta features the locale, and the title features “install” and “carpet.” The site hasn’t received any links in 3 months, and its anchor profile has no money-keyword anchors, only brand, generic, and naked URLs.

| Anchors | % |
|---|---|
| Naked URL | 40% |
| Brand name, LLC | 20% |
| Naked URL variation | 20% |
| Generic | 20% |
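If you export a target’s anchors, a minimal Python sketch can reproduce a breakdown like the one above and run a crude money-anchor check. The anchor types and texts below are placeholders, chosen only so the counts match the table; they are not the real site’s anchors, and the substring match is deliberately simple.

```python
from collections import Counter


def anchor_breakdown(anchor_types: list[str]) -> dict[str, int]:
    """Percentage of anchors per type, rounded to whole numbers."""
    if not anchor_types:
        return {}
    counts = Counter(anchor_types)
    total = len(anchor_types)
    return {kind: round(100 * n / total) for kind, n in counts.items()}


def has_money_anchor(anchor_texts: list[str], target_terms: list[str]) -> bool:
    """Crude check: does any anchor text contain a target keyword phrase?"""
    lowered = [a.lower() for a in anchor_texts]
    return any(term.lower() in a for a in lowered for term in target_terms)


# Placeholder data, chosen only to match the percentages in the table.
anchor_types = ["Naked URL", "Naked URL", "Brand name, LLC", "Naked URL variation", "Generic"]
anchor_texts = [
    "example-carpets.com",
    "https://example-carpets.com",
    "Example Carpets, LLC",
    "www.example-carpets.com/",
    "click here",
]

print(anchor_breakdown(anchor_types))
# {'Naked URL': 40, 'Brand name, LLC': 20, 'Naked URL variation': 20, 'Generic': 20}
print(has_money_anchor(anchor_texts, ["carpet installation", "install carpet"]))  # False
```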

Add the URL and keyword to your tracker. If you’ve planned this out properly, you can track the target site for a week to get an idea of how it moves around in the SERPs for the keyword. That said, if you went with the method above and picked a site on pages 5 to 8, a move to pages 2-4 would mean your link likely caused that jump.
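A minimal Python sketch of the baseline idea, assuming one ranking check per day during the baseline week: the link only gets credit if the new position beats the best position seen all week by a buffer. The 5-position `margin` is an arbitrary assumption for day-to-day wobble, and the sample positions are invented.

```python
def link_caused_jump(baseline: list[int], after: int, margin: int = 5) -> bool:
    """True if the post-placement position beats the whole baseline week by `margin`.

    Positions are 1-based, so smaller is better. `margin` is an assumed
    buffer for normal fluctuation, not a rule from this process.
    """
    best_baseline = min(baseline)
    return after <= best_baseline - margin


# Invented baseline week hovering in the high 60s-70s.
week = [66, 70, 64, 68, 72, 65, 69]
print(link_caused_jump(week, 38))  # True: well clear of the baseline range
print(link_caused_jump(week, 62))  # False: within normal wobble
```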

Either order an article from a writer or spin something up. Spinning is the cheaper option in the grand scheme of things, but if you don’t mind paying someone for a $5 throwaway article, go for that. You may even consider theming the article to the niche of the network site. Say your PBN is about travel: theme the article around where to stay when you travel, and have the writer mention carpets vs. hardwood flooring to work the content in.

“It was hard to find someone for carpet installation in City, our hometown, but we finally did and it worked out great. Unfortunately for us, our Airbnb had hardwood floors, so we had to be careful not to scratch them…”

Network site metrics:

I know most people will ask for these, so I’ll provide them.

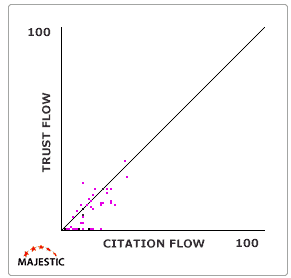

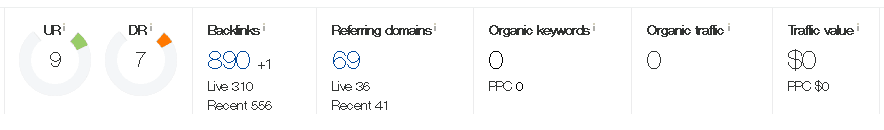

Again, this is something I’m not a big fan of. I don’t really look at “metrics” when I’m buying or using network sites. When I’m working at scale and need to filter sites, I use Majestic graphs plus Ahrefs. Once they’re down to a manageable number, I look at their link profiles. If their links are solid, they should work. Typically, if the graph looks similar to the one below and the site has a decent number of referring domains with contextual links, you are going to see gains. That’s also why you don’t see me advertising tons of metrics on my gig.

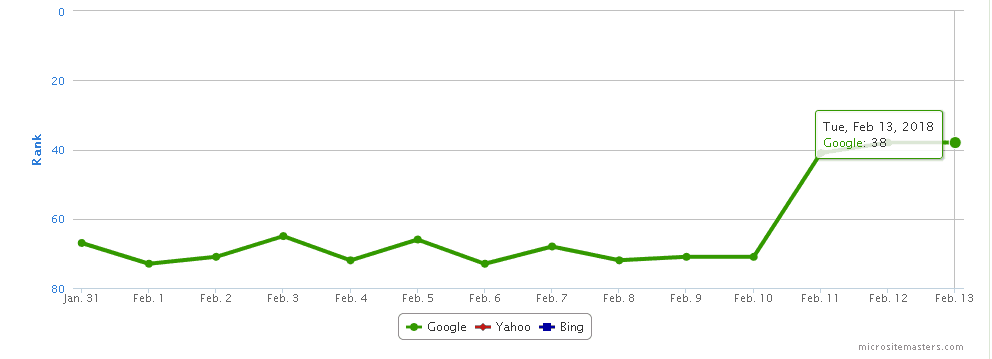

So this part, the waiting, can be the worst. I’ve seen movement in as little as 24 hours and as long as 3-4 months. I wrote this article on and off over several weeks, and on 1/31 I posted the test article on a network site that I forgot I owned.

So the placement went live on the 31st. The PBN had been sitting there since it was set up in August/September 2017. Indexing took a while, and Google cached the update on 2/9. We can see a jump from the high 60s-70s to 38th on Micrositemasters. I usually double-check on whatsmyserp, as it tends to have more up-to-date rankings than most trackers.

As expected, when I checked the next day, the ranking was 18th on Micrositemasters. That’s typically why I run the search on whatsmyserp, just to double-check.

Overall movement: high 60s-70s to 18th. Not bad for one link.

Whenever a site drains or simply fails outright, I run a re-test. Maybe you missed something when picking your target, or overlooked something else. This time, we also increase the number of indexed pages to get past a possible filter that may be causing the failure.

While running tests, a buddy and I noticed that sites with more than 10 pages indexed would pass, and this included re-tests. So we grabbed some archived content from archive.org and created new articles to increase the number of indexed pages.

The test site above had only 8 pages indexed and it passed. If it had failed, I would have created 5 more posts about the site’s niche or gone into archive.org and repurposed the old content.

The new articles also get new “authority” outbound links. I go to Google News and search for my keyword, in this case “carpet installation”, or I’ll use Ahrefs Content Explorer to find more links for the articles. This looks better than the Wikipedia links everyone uses.

Once this is done, I wait a week or two for the content to index. Then I restart the process: find a new niche and target site, post the link, and wait for gains.

I’ve had sites that just don’t pass, even after all this. There may be more filters, but I personally don’t know them. I simply remove the hosting, let the domain drop, and move on to the next site.
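The re-test flow can be summed up as a small decision function. This is a hypothetical Python sketch: it encodes the “more than 10 indexed pages” rule of thumb and assumes a single re-test before dropping a site, which is a simplification of the judgment calls described here.

```python
def next_step(passed: bool, indexed_pages: int, retests_done: int, page_threshold: int = 10) -> str:
    """Decide what to do with a network site after a link test (hypothetical helper)."""
    if passed:
        return "keep"
    if retests_done == 0:
        # Minimum number of posts needed to get past the page threshold.
        needed = max(0, page_threshold + 1 - indexed_pages)
        if needed:
            return f"add at least {needed} posts, wait for indexing, then re-test"
        return "pick a new niche and target, then re-test"
    # Assumption: one failed re-test is enough to give up on the site.
    return "drop: remove hosting and move on"


print(next_step(True, 8, 0))    # keep
print(next_step(False, 8, 0))   # add at least 3 posts, wait for indexing, then re-test
print(next_step(False, 14, 1))  # drop: remove hosting and move on
```

For the 8-page site above, 3 posts is the bare minimum to clear 10; adding 5 leaves some headroom.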

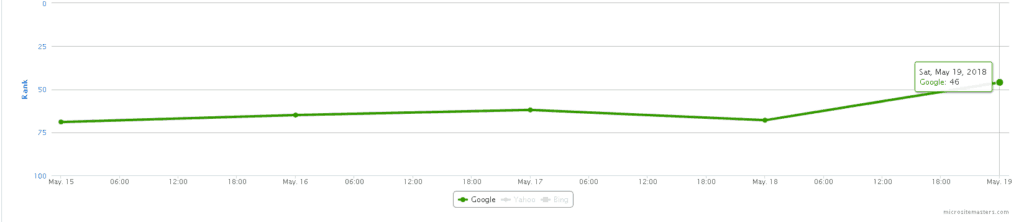

The following is an example from my personal network. This is a prime example of why a retest is performed and why a possible filter is in place.

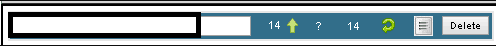

The original test is now passing because of the addition of a new post (the new test). The old test jumps to 13th the same day the new test passes, which indicates to me that a filter was in place on this site.

Hopefully, this provided some background on how most providers go about toxicity testing. No matter how you slice it, it’s better to test something and know that it works rather than to guess and hope for the best.